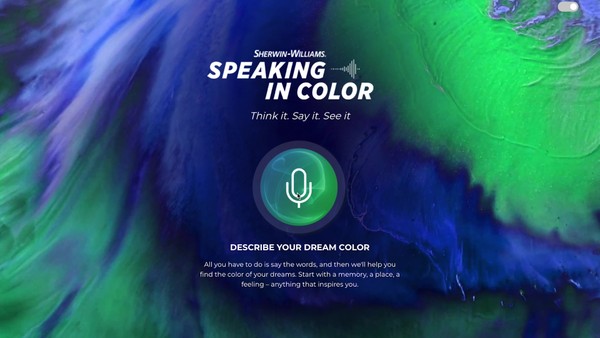

SPEAKING IN COLOR

WUNDERMAN THOMPSON, Minneapolis / SHERWIN-WILLIAMS COIL COATINGS / 2022

Overview

Describe the creative idea

The perfect color can come from anywhere—a memory, a place or even a dream. We needed to find a new way to bring a color from an architect’s mind into reality. The most natural way to express a thought or feeling is through language. We wanted to encourage architects to describe exactly what inspired them in their own words.

Imagine speaking the words “drinking champagne on the French Riviera at sunset” and receiving a custom color palette that fits your memory. And what if that palette could be fine-tuned until it reached the perfect color that exists in your mind? This is what Speaking in Color empowers architects, one of Sherwin-Williams’ most influential audiences, to do with just their voice. This one-of-a-kind color selection solution combines the art and science of color making with cutting-edge technology.

Describe the execution

Speaking in Color is a React web app that uses natural language to find the architect’s perfect color. Over the span of eight months, we took Speaking in Color from idea to prototype to proof-of-concept production. Before launch, we ran usability tests with select Sherwin-Williams architectural experts, and the findings informed the development sprints that added enhanced functionality.

August 2021: Concept identified

September 2021: Prototype image analysis to derive primary color

October 2021: Speech recognition added

January 2022: Beta standalone React app

February 2022: Proprietary code developed to allow Natural Language Processing adjustments

April 2022: Initial production release

We use a combination of third-party and integrated proprietary code to break spoken or typed language down into its component parts of speech, and we use the resulting output to run an image query on Google’s programmable search platform. We then use a version of Google’s Vision AI to derive the composite colors of the returned images as a group, and force-rank the most likely colors according to their volume in those images. Our custom algorithm then lets users tweak the returned color by translating statements like “make it dimmer,” “add warmth,” or “more like the 1980s” into mathematical RGB adjustments.
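As a rough illustration of the last two steps, the Python sketch below pools dominant colors from several images, ranks them by total pixel share, and applies simple phrase-driven RGB adjustments. It is not the production algorithm, which is proprietary. The input shape (an RGB triple paired with a pixel fraction), the function names `rank_colors` and `adjust`, the bucket size, and the adjustment amounts are all assumptions made for this example.

```python
from collections import defaultdict

RGB = tuple[int, int, int]


def rank_colors(images: list[list[tuple[RGB, float]]], bucket: int = 32) -> list[RGB]:
    """Pool dominant colors from all images and rank them by total pixel share.

    Each image is a list of (rgb, pixel_fraction) pairs. This shape is an
    assumption for the sketch, not the format used in production.
    """
    totals: dict[tuple[int, int, int], float] = defaultdict(float)
    for colors in images:
        for (r, g, b), fraction in colors:
            # Quantize each channel so near-identical shades from
            # different images are counted as the same color.
            key = (r // bucket, g // bucket, b // bucket)
            totals[key] += fraction
    ranked = sorted(totals, key=totals.get, reverse=True)
    # Represent each bucket by its centre value.
    return [tuple(c * bucket + bucket // 2 for c in key) for key in ranked]


def clamp(value: float) -> int:
    """Round a channel value and keep it inside the 0-255 range."""
    return max(0, min(255, round(value)))


# Hypothetical keyword-to-adjustment table; the amounts are illustrative only.
ADJUSTMENTS = {
    "dimmer": lambda r, g, b: (r * 0.85, g * 0.85, b * 0.85),  # scale all channels down
    "warmth": lambda r, g, b: (r + 15, g, b - 15),             # shift toward red, away from blue
}


def adjust(color: RGB, phrase: str) -> RGB:
    """Apply every adjustment whose keyword appears in the phrase."""
    r, g, b = color
    lowered = phrase.lower()
    for keyword, fn in ADJUSTMENTS.items():
        if keyword in lowered:
            r, g, b = fn(r, g, b)
    return (clamp(r), clamp(g), clamp(b))


if __name__ == "__main__":
    images = [
        [((250, 200, 150), 0.6), ((20, 40, 200), 0.4)],
        [((245, 198, 140), 0.5), ((10, 200, 30), 0.5)],
    ]
    top = rank_colors(images)[0]
    print(top, adjust(top, "add warmth"))
```

A phrase such as “more like the 1980s” has no single arithmetic meaning, so a keyword table like this one would not cover it; handling it would need some learned or curated mapping from the phrase to a color shift, which is outside the scope of this sketch.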

We launched this tool in April 2022 to an exclusive segment of Sherwin-Williams’ top architect customers. We are actively testing it with this audience to inform the roadmap for future functionality.

Speaking in Color will launch to Sherwin-Williams’ full architect audience in early 2023 through an integrated campaign and exclusive digital activations, timed to coincide with the company’s national sales meeting to build excitement both internally and externally. Once app usage reaches critical mass, the system will be trained on lexical inputs so that it gets smarter over time and gives Sherwin-Williams a dictionary of how its customers talk about color.
